What a GPU Is and Why It Differs From a CPU

A GPU (graphics processing unit) specializes in executing vast numbers of calculations simultaneously. It was originally designed to render images for computer displays, but it has since become a general-purpose computing engine for applications ranging from AI model training to climate simulation.

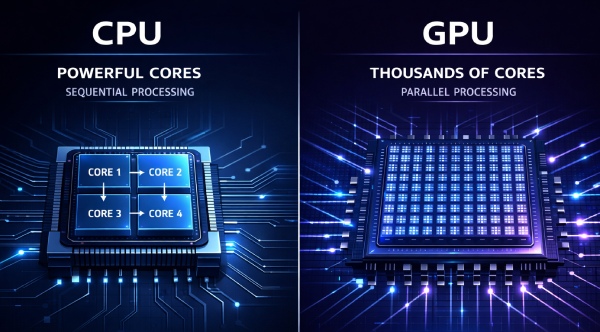

The difference between a GPU and a CPU comes down to architecture. A central processing unit (CPU) typically contains roughly 8 to 64 high-performance cores, each optimized to execute complex instructions quickly and in sequence. A modern GPU, by contrast, can contain thousands of smaller cores built to run many simpler calculations at the same time. Think of a CPU as a team of a dozen expert surgeons, each capable of intricate, precise work. A GPU is closer to a factory floor with thousands of workers, each handling one straightforward task, all at once.

That parallel structure makes GPUs extraordinarily effective for workloads that can be broken into many simultaneous operations. Rendering a 3D scene, training a neural network, or processing a 4K video frame all involve millions of repetitive mathematical operations. A CPU would handle these sequentially; a GPU tears through them in parallel.
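The idea of "breaking a workload into many simultaneous operations" can be sketched in a few lines of Python. This is only an illustration: plain Python runs everything on the CPU, and the function names (`brighten`, `run_sequential`, `run_in_chunks`) and the 8-pixel frame are made up for the example. What it shows is the property a GPU exploits: each output value depends only on its own input, so the work can be split into chunks without changing the result.

```python
# Conceptual sketch only: this runs on the CPU. It shows the shape of a
# data-parallel workload, not real GPU code.

def brighten(pixel, gain=1.2):
    """Per-element operation: depends only on its own input pixel."""
    return min(255, round(pixel * gain))

def run_sequential(pixels):
    """CPU-style: one worker walks the whole frame in order."""
    return [brighten(p) for p in pixels]

def run_in_chunks(pixels, workers=4):
    """GPU-style decomposition: split the frame into independent chunks.

    Each chunk could be handed to a separate worker, because no pixel
    needs the result of any other pixel.
    """
    size = max(1, -(-len(pixels) // workers))  # ceiling division, at least 1
    chunks = [pixels[i:i + size] for i in range(0, len(pixels), size)]
    results = [[brighten(p) for p in chunk] for chunk in chunks]
    return [p for chunk in results for p in chunk]

frame = [10, 100, 200, 250, 0, 128, 64, 255]  # hypothetical 8-pixel frame
same_result = run_sequential(frame) == run_in_chunks(frame)
```

Because the chunked version gives the same answer as the sequential one, the chunks can in principle run at the same time. A task where each step needs the previous step's result would not split this way.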

How GPU Power Shows Up in Everyday Work and Industry

Across millions of desktops, workstations, and data centers, GPUs are quietly doing work that most people never think about.

Gaming, Video Production, and Creative Workflows

The most familiar consumer example is gaming. Cyberpunk 2077, for instance, supports real-time ray tracing, which simulates how light behaves in every frame at 60 frames per second or more. Without the GPU's parallel processing, that level of visual fidelity would be out of reach. 4K and 8K video editing relies on the same hardware. Premiere Pro and DaVinci Resolve run effects, color grading, and export encoding on the GPU, which can cut export times from hours to minutes.

3D artists and animators depend heavily on GPU acceleration for fast, real-time viewport previews. Architects working in building-design software like Autodesk Revit, or in general-purpose 3D tools like Blender, can inspect an entire building model from different perspectives without waiting hours for a render. That capability changes how people approach design.

Industrial and Scientific Workflows

Beyond consumer software, the industrial applications are substantial. Radiologists use GPU-accelerated imaging tools to process MRI and CT scans faster, with AI models trained on GPU clusters helping flag anomalies that might be missed under time pressure. Financial firms run Monte Carlo simulations across thousands of variables simultaneously to model portfolio risk. Engineers at automotive companies simulate crash dynamics and aerodynamic drag digitally before a single physical prototype is built.
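Monte Carlo simulation is a good example of why such workloads suit parallel hardware: every trial is independent of the others. The sketch below is a deliberately simplified, CPU-only illustration using Python's standard `random` module. The return model (a single normally distributed yearly return), the parameter values, and the function names are all assumptions made for the example, not how any particular firm models risk.

```python
import random

def simulate_portfolio_return(rng, mean=0.05, volatility=0.2):
    """One independent trial: draw a hypothetical one-year return."""
    return rng.gauss(mean, volatility)

def value_at_risk(trials=10_000, confidence=0.95, seed=42):
    """Estimate the loss that is exceeded in only (1 - confidence) of trials."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    returns = sorted(simulate_portfolio_return(rng) for _ in range(trials))
    cutoff = int((1 - confidence) * trials)  # index of the worst 5% boundary
    return -returns[cutoff]  # report the loss as a positive fraction

var_95 = value_at_risk()
```

No trial reads another trial's result, so the loop could be spread across thousands of GPU threads, each running its own batch of trials, with the sorting and cutoff step done once at the end.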

Scientific research has its own dependencies. Climate modeling, protein folding analysis, and particle physics simulations all require the kind of throughput only GPU clusters can provide. The scale of data involved makes CPU-only approaches impractical.

How GPU Computing Powers AI, Simulation, and Modern Digital Systems

Parallel processing is what separates GPU workloads from everything a standard CPU handles well. Where a CPU works through tasks sequentially using a handful of powerful cores, a GPU runs thousands of smaller operations simultaneously. That difference matters enormously when the task involves processing millions of data points at once.

From Training to Real-Time Inference

Training a deep learning model means feeding enormous datasets through layered mathematical functions, adjusting billions of parameters until the model learns to recognize patterns. Inference is the next step - taking that trained model and using it to make real-time predictions. Both stages demand the kind of sustained parallel throughput that GPUs are built for.
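The two stages can be sketched with a toy model in plain Python. This is a minimal illustration under loud assumptions: the model has two parameters rather than billions, the data is a made-up straight line, and the names `train` and `infer` are hypothetical. Training loops over the data many times and adjusts the parameters, while inference is a single cheap pass with the parameters fixed.

```python
def train(xs, ys, learning_rate=0.05, steps=2000):
    """Training: repeatedly nudge the parameters to shrink the error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Forward pass: one multiply-add per data point, each independent.
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradients of the mean squared error with respect to w and b.
        grad_w = 2 * sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = 2 * sum(errors) / n
        # Gradient descent update.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

def infer(params, x):
    """Inference: apply the already-trained parameters to a new input."""
    w, b = params
    return w * x + b

# Hypothetical data generated from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]

params = train(xs, ys)
prediction = infer(params, 10.0)  # should land close to 21
```

The per-data-point multiply-adds inside the loop are the part a GPU parallelizes. In a real network the same pattern repeats across billions of parameters and far larger datasets.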

Recommendation systems at companies like Netflix or Spotify run inference constantly, matching user behavior against learned patterns in milliseconds. Autonomous vehicles process sensor data from cameras and lidar simultaneously, requiring near-instant decisions. Natural language tools like large language models depend on GPU clusters to generate coherent responses fast enough to feel conversational.

Scientific Computing and Enterprise Workloads

Scientific applications are just as demanding. Drug discovery researchers at firms like Schrödinger use GPU-accelerated simulations to model how molecules interact, cutting years off traditional lab timelines. Weather forecasting agencies run atmospheric simulations across global grids, a task that would take days on CPU-only systems. Digital twins - virtual replicas of physical systems like factories or power grids - rely on real-time simulation to flag failures before they happen.

Cybersecurity analysts use GPU-accelerated tools to scan network traffic at scale, catching anomalies that would slip past slower systems. Cloud providers like AWS and Google Cloud now offer GPU instances precisely because so many enterprise workloads, from analytics to generative AI, require that processing power on demand.

Why Efficiency and Future Trends Make GPUs Even More Important

A fair criticism of GPU computing is its high power demand. High-end data center GPUs often draw 300 to 700 watts each, and AI training clusters with thousands of such GPUs can consume as much power as a small town. Anyone doing serious infrastructure planning has to take that into account.

The counter-argument is the performance per watt that GPUs deliver. For workloads that parallelize well, a GPU can finish in a few minutes what would take a CPU a few hours. Measured across the whole job, the total energy consumed therefore often favors the GPU. Advances in AMD and NVIDIA architectures, notably mixed-precision computing, push performance and efficiency further by running certain calculations in lower-precision number formats, which reduces both time and energy. Smarter workload scheduling and liquid cooling in modern data centers have narrowed the gap further.
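The energy argument comes down to simple arithmetic: energy is power multiplied by time, so a device that draws more power can still use less energy if it finishes much sooner. The figures below are hypothetical and chosen only to illustrate the point. The 700 W GPU draw is the upper end of the range mentioned above, while the 250 W CPU draw, the 5-minute GPU runtime, and the 3-hour CPU runtime are assumptions.

```python
def energy_wh(power_watts, runtime_hours):
    """Energy in watt-hours = power (W) x time (h)."""
    return power_watts * runtime_hours

# Hypothetical job that parallelizes well.
gpu_energy = energy_wh(power_watts=700, runtime_hours=5 / 60)  # about 58 Wh
cpu_energy = energy_wh(power_watts=250, runtime_hours=3)       # 750 Wh

# How many times more energy the CPU run uses for the same job.
ratio = cpu_energy / gpu_energy  # about 12.9
```

Under these assumptions the GPU draws nearly three times the power but uses roughly a thirteenth of the energy. The comparison reverses for jobs that do not parallelize, where the GPU's runtime advantage disappears.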

Specialized Accelerators, Edge AI, and Integrated Architectures

Looking ahead, the trajectory is clear enough. Specialized accelerators designed for specific tasks, such as Google's TPUs for tensor operations, suggest a future where GPU-style parallel processing fragments into purpose-built silicon. CPU-GPU integration is tightening too, with chips like Apple's M-series blurring the line between the two. Edge AI is pushing inference workloads onto devices with strict power budgets, demanding ever more efficient parallel compute at small scale.

Simulation environments are growing in complexity, real-time rendering expectations are rising, and data volumes show no sign of shrinking. There's no denying that the demand for scalable, parallel compute will only intensify as software catches up to what hardware already makes possible.

GPU Power Is Becoming Core to Modern Progress

Specialized hardware keeps developing rapidly, and its relevance to everyday computing is hard to deny. A CPU handles tasks mostly one step at a time, which suits sequential, logic-heavy work, while a GPU is designed to run large numbers of smaller operations in parallel. That makes the GPU the preferred tool for rendering, AI training, scientific simulation, and real-time data processing. The distinction between CPU and GPU matters more now than ever before.

A video editor rendering 8K footage, a pharmaceutical researcher modeling protein interactions, a game studio creating photorealistic environments, a bank running fraud detection across millions of transactions per second: all of these depend on GPU computing to reach a scale and speed that would otherwise be out of reach. The real challenge remains balancing performance against energy consumption, especially as data centers continue to scale to meet demand, and the industry is responding with new architectures that deliver more performance per watt. Parallel computing will increasingly shape how we build software, conduct science, and create. The next wave of computing will be defined not by any single breakthrough, but by the expanding range of what GPUs make practical.